What is Storm

Apache Storm is a distributed realtime computation system that processes unbounded streams of data, doing for realtime processing what Hadoop did for batch processing. Storm is scalable and fault-tolerant, guarantees that your data will be processed, and is easy to set up and operate. Its primary use cases include realtime analytics, online machine learning, continuous computation, distributed RPC, and ETL.

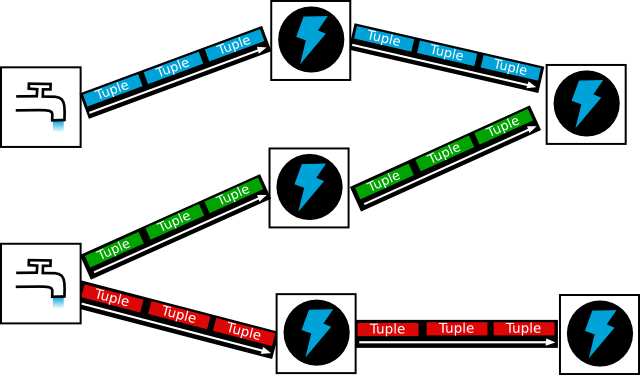

Storm's logical overview

At the highest level, Storm is composed of topologies. A topology is a graph of computation: each node contains processing logic, and each link between nodes indicates how data should be passed between them. A topology is therefore a network of streams, where each stream is an unbounded sequence of tuples. In short:

- Tuple: An ordered list of elements, e.g. ("orange", "tweet-123", ...). Valid element types include String, Integer, and byte array; you can also define your own serializers so that custom types can be used natively within tuples.

- Stream: An unbounded sequence of tuples: Tuple1, Tuple2, Tuple3, ...
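To make the two definitions concrete, here is a minimal sketch in plain Python. It does not use the Storm API; the field layout `(keyword, tweet_id)`, the keyword list, and the function name `tweet_stream` are assumptions made up for illustration. A generator that never terminates stands in for an unbounded stream.

```python
from itertools import count, islice
from typing import Iterator, Tuple

# Hypothetical field layout for illustration: (keyword, tweet_id).
TweetTuple = Tuple[str, str]


def tweet_stream() -> Iterator[TweetTuple]:
    """Yield tuples forever, modelling an unbounded stream."""
    keywords = ("orange", "apple", "mango")  # made-up sample data
    for n in count(start=123):
        yield (keywords[n % len(keywords)], f"tweet-{n}")


# A consumer can only ever inspect a finite prefix of an unbounded stream.
first_three = list(islice(tweet_stream(), 3))
print(first_three)
```

The point of the generator is that the stream has no defined end: a consumer processes tuples as they arrive rather than waiting for a complete data set.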

Storm uses spouts, which take a continuous input stream from a source (for example, Twitter) and emit it as tuples for other components, called bolts, to consume. An emitted tuple can go from a spout to a bolt and/or from one bolt to another bolt. A Storm topology may have one or more spouts and bolts; as the implementer, you can configure as many of each as the business logic requires.
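The spout-to-bolt-to-bolt flow can be sketched with chained Python generators. This is a toy model and not the Storm API: the names `sentence_spout`, `split_bolt`, and `count_bolt` are hypothetical, and the spout reads from a short fixed list so that the example terminates, whereas a real spout would keep emitting.

```python
from collections import Counter
from typing import Iterable, Iterator, Tuple


def sentence_spout() -> Iterator[Tuple[str]]:
    """Spout: emits one-field tuples from a source (a fixed list here)."""
    for sentence in ["storm processes streams", "streams of tuples"]:
        yield (sentence,)


def split_bolt(stream: Iterable[Tuple[str]]) -> Iterator[Tuple[str]]:
    """Bolt: consumes sentence tuples and emits one tuple per word."""
    for (sentence,) in stream:
        for word in sentence.split():
            yield (word,)


def count_bolt(stream: Iterable[Tuple[str]]) -> Iterator[Tuple[str, int]]:
    """Bolt: consumes word tuples and emits (word, running_count) tuples."""
    counts: Counter = Counter()
    for (word,) in stream:
        counts[word] += 1
        yield (word, counts[word])


# Wire the topology: spout -> split bolt -> count bolt.
results = list(count_bolt(split_bolt(sentence_spout())))
print(results)
```

Each function only knows about the tuples it receives and emits, which is what lets a topology be rearranged or extended with more bolts without touching the others.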

(The above image is from Apache Storm’s website)

Storm's architectural overview

Storm runs in a clustered environment. Similar to Hadoop, it has two types of nodes:

- Master node: This node runs a daemon process called ‘Nimbus’. Nimbus is responsible for distributing the topology's code (spouts and bolts) across the cluster, assigning tasks to worker nodes, and monitoring the success and failure of units of work.

- Worker node: Each worker node runs a daemon process called the ‘Supervisor’. A Supervisor is responsible for starting and stopping worker processes. Each worker process executes a subset of a topology, so the execution of a topology is spread across different worker processes running on the cluster.

Storm leverages ZooKeeper to coordinate communication between Nimbus and the Supervisors. Nimbus communicates with the Supervisors by passing messages through ZooKeeper. ZooKeeper maintains the complete state of the topology, which allows Nimbus and the Supervisors to be fail-fast and stateless.
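The coordination pattern described above can be illustrated with a toy model in which a plain dictionary stands in for ZooKeeper. Everything here is an assumption for illustration: the function names, the supervisor and task identifiers, and the round-robin assignment policy are made up and are not Storm's actual scheduler or ZooKeeper layout.

```python
from typing import Dict, List

# Stand-in for ZooKeeper: a shared key-value store (a plain dict here).
coordination_store: Dict[str, List[str]] = {}


def nimbus_assign(tasks: List[str], supervisors: List[str]) -> None:
    """Write a round-robin task assignment into the shared store."""
    for supervisor in supervisors:
        coordination_store[supervisor] = []
    for i, task in enumerate(tasks):
        target = supervisors[i % len(supervisors)]
        coordination_store[target].append(task)


def supervisor_poll(supervisor_id: str) -> List[str]:
    """Read this supervisor's assignment from the store.

    The supervisor keeps no state of its own, so it can be restarted
    and recover its assignment simply by polling again.
    """
    return list(coordination_store.get(supervisor_id, []))


nimbus_assign(["spout-1", "bolt-1", "bolt-2"], ["supervisor-a", "supervisor-b"])
print(supervisor_poll("supervisor-a"))
print(supervisor_poll("supervisor-b"))
```

Because the two sides never call each other directly and all state lives in the store, either side can crash and restart without losing the assignment, which is the property the paragraph above attributes to the ZooKeeper-based design.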

Storm modes

1. Local mode: In local mode, Storm executes topologies completely in-process by simulating worker nodes using threads.

2. Distributed mode: Storm runs topologies across a cluster of machines.
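The idea behind local mode, running every component in one process with threads in place of worker nodes, can be sketched in plain Python. This is only an analogy and not Storm's local-mode implementation: the names `spout`, `upper_bolt`, and `run_local` are hypothetical, and a sentinel value ends the demo stream so the threads can exit.

```python
import queue
import threading
from typing import List, Tuple

SENTINEL = None  # marks the end of the demo stream; real streams do not end


def spout(out_q: queue.Queue) -> None:
    """Emit a few one-field tuples, then signal completion."""
    for word in ["storm", "bolt", "spout"]:
        out_q.put((word,))
    out_q.put(SENTINEL)


def upper_bolt(in_q: queue.Queue, results: List[Tuple[str]]) -> None:
    """Consume tuples from the queue and collect upper-cased copies."""
    while True:
        item = in_q.get()
        if item is SENTINEL:
            break
        results.append((item[0].upper(),))


def run_local() -> List[Tuple[str]]:
    """Run the spout and the bolt as two threads inside one process."""
    q: queue.Queue = queue.Queue()
    results: List[Tuple[str]] = []
    workers = [
        threading.Thread(target=spout, args=(q,)),
        threading.Thread(target=upper_bolt, args=(q, results)),
    ]
    for w in workers:
        w.start()
    for w in workers:
        w.join()
    return results


local_results = run_local()
print(local_results)
```

With one producer and one consumer sharing a FIFO queue, the output order matches the emission order, which makes this kind of in-process setup convenient for development and testing before deploying to a cluster.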